Blended Learning

Digital Workshop

An immersive, gamified team workshop bridging foundational instruction and certification-level performance.

Scroll down for a Storyline visual walkthrough sample.

Audience

Client teams participating in a multi-phase analytics certification program

Tools Used

Articulate Storyline 360, Microsoft Word, PowerPoint, Asana

My Contribution

I served as instructional design lead on this project, driving all content strategy, scenario design, gamification architecture, visual direction, and learner flow. Working directly in Storyline 360 alongside a developer throughout the build, I directed interaction design, made content-level edits within the file, and collaborated closely on the technical execution of the experience.

Note: Original materials are masked due to company confidentiality. All visuals shown are masked mock-ups.

The Challenge

The organization needed a way to bridge foundational analytics instruction with real-world performance. Existing training covered concepts, but learners lacked a structured opportunity to apply those concepts under realistic conditions — the kind of hands-on practice that prepares people to perform, not just recall.

The program also needed to support a live, facilitated environment with multiple learners working simultaneously, requiring interaction design that could accommodate team collaboration, facilitator oversight, and individual accountability at the same time.

The Solution

I designed the practical application component of a three-part certification program:

Instructor-Led Training (ILT): Foundational, facilitator-led sessions to build baseline knowledge (designed by broader team)

Practical Application Component (my role): An immersive, gamified digital workshop where learners applied analytics skills to real-world reporting challenges inside a cohesive themed environment

Competency Assessments: Formal assessments to validate mastery and award certification

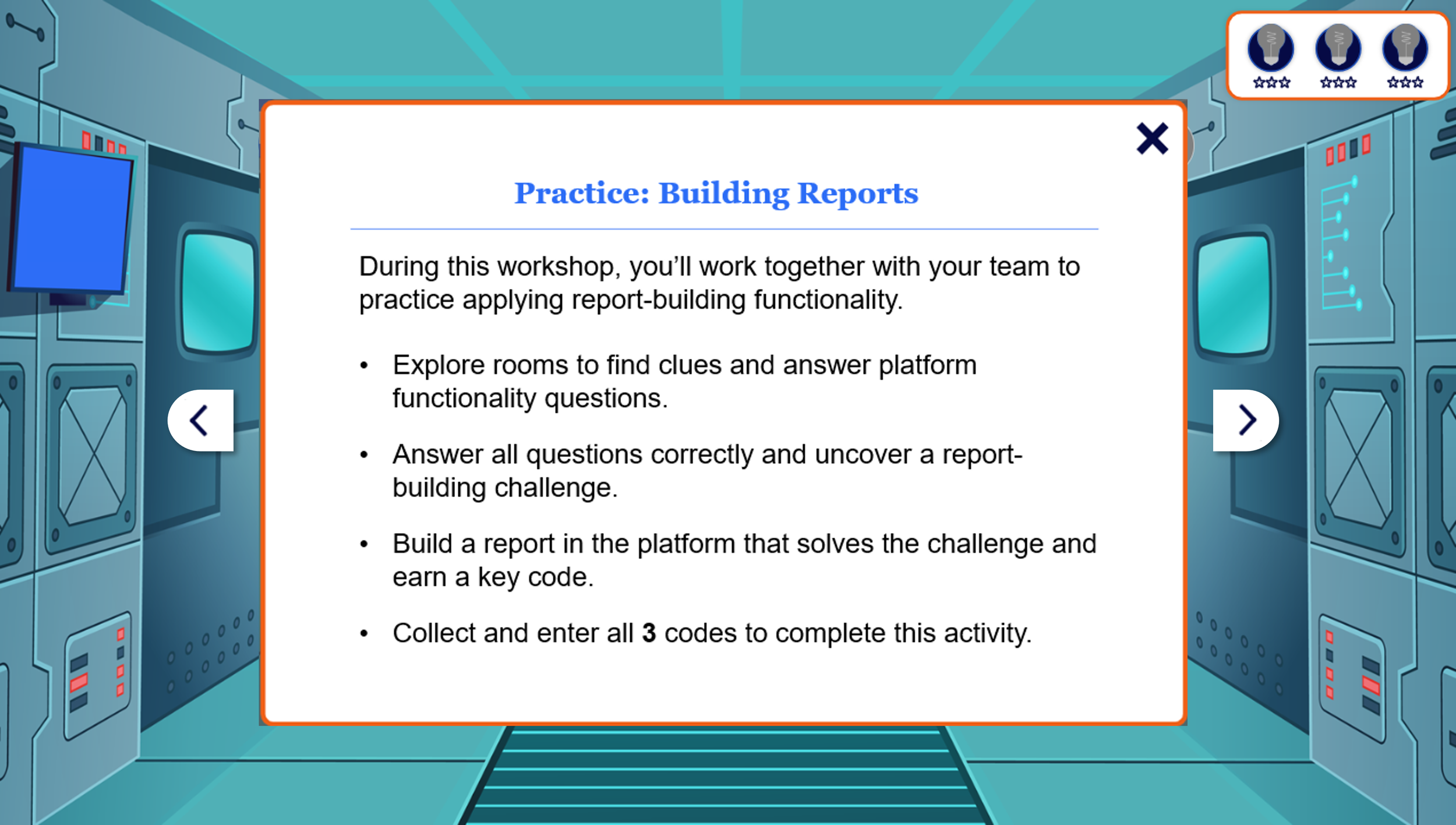

The workshop was structured as a mission narrative: learners were stationed at a lunar analytics hub tasked with uncovering the competitive landscape of the galactic market. Three report-building challenges stood between them and mission completion — each requiring team collaboration, facilitator verification, and a code entry in the Control Room to advance. Initial pilot results indicated a 92% learner satisfaction rating for engagement and applicability.

Storyline Sample: Visual Walkthrough

Click the arrows to view masked screen captures from the actual practical component build in Storyline 360.

My Process

Gamification Architecture

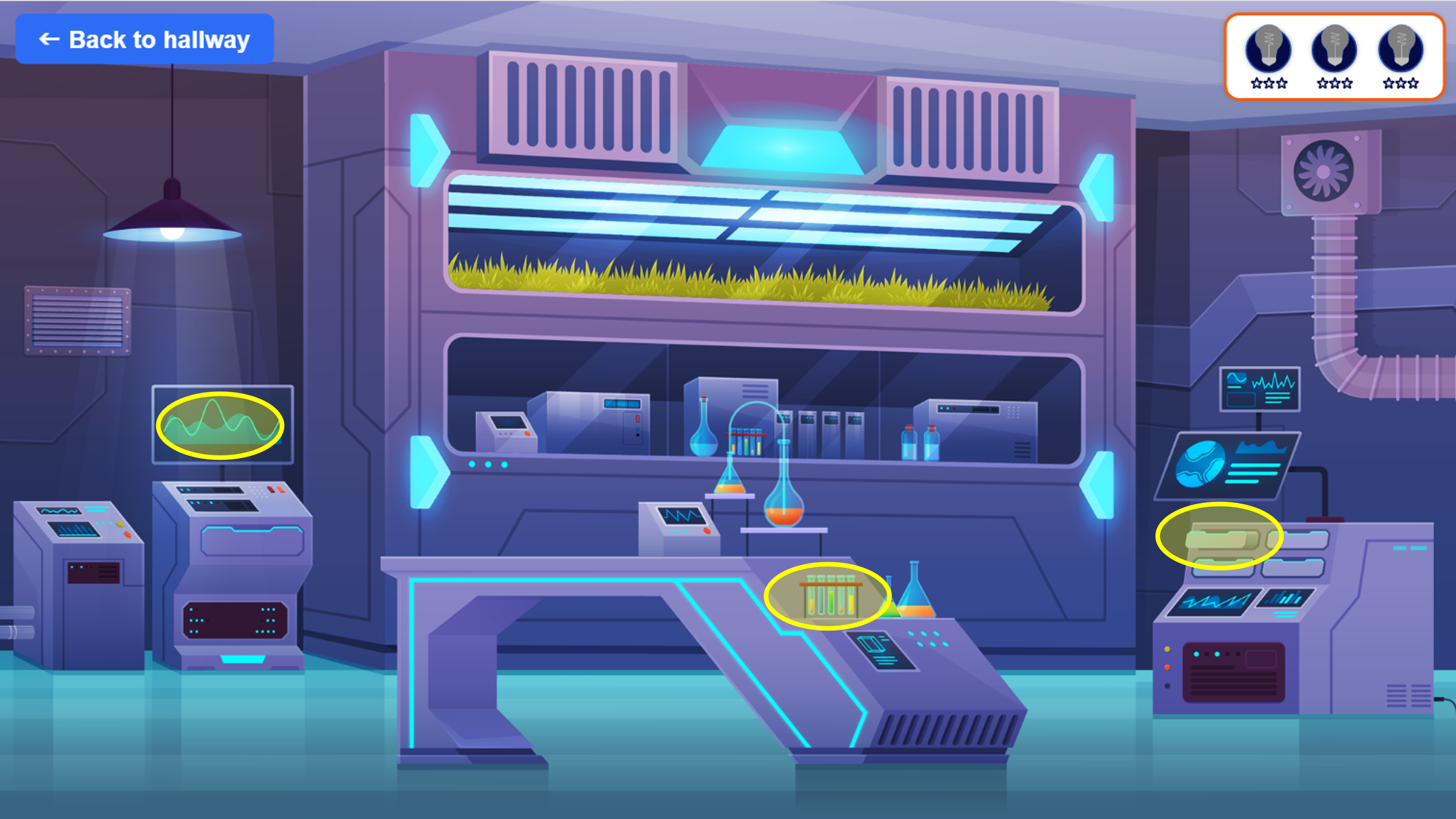

The experience was built around a mission narrative: learners were stationed at a lunar analytics hub and tasked with unlocking a comprehensive view of the galactic market by solving three report-building challenges. To progress, teams explored three distinct rooms, found hidden clues, answered knowledge checks collaboratively, and earned stars toward uncovering each challenge.

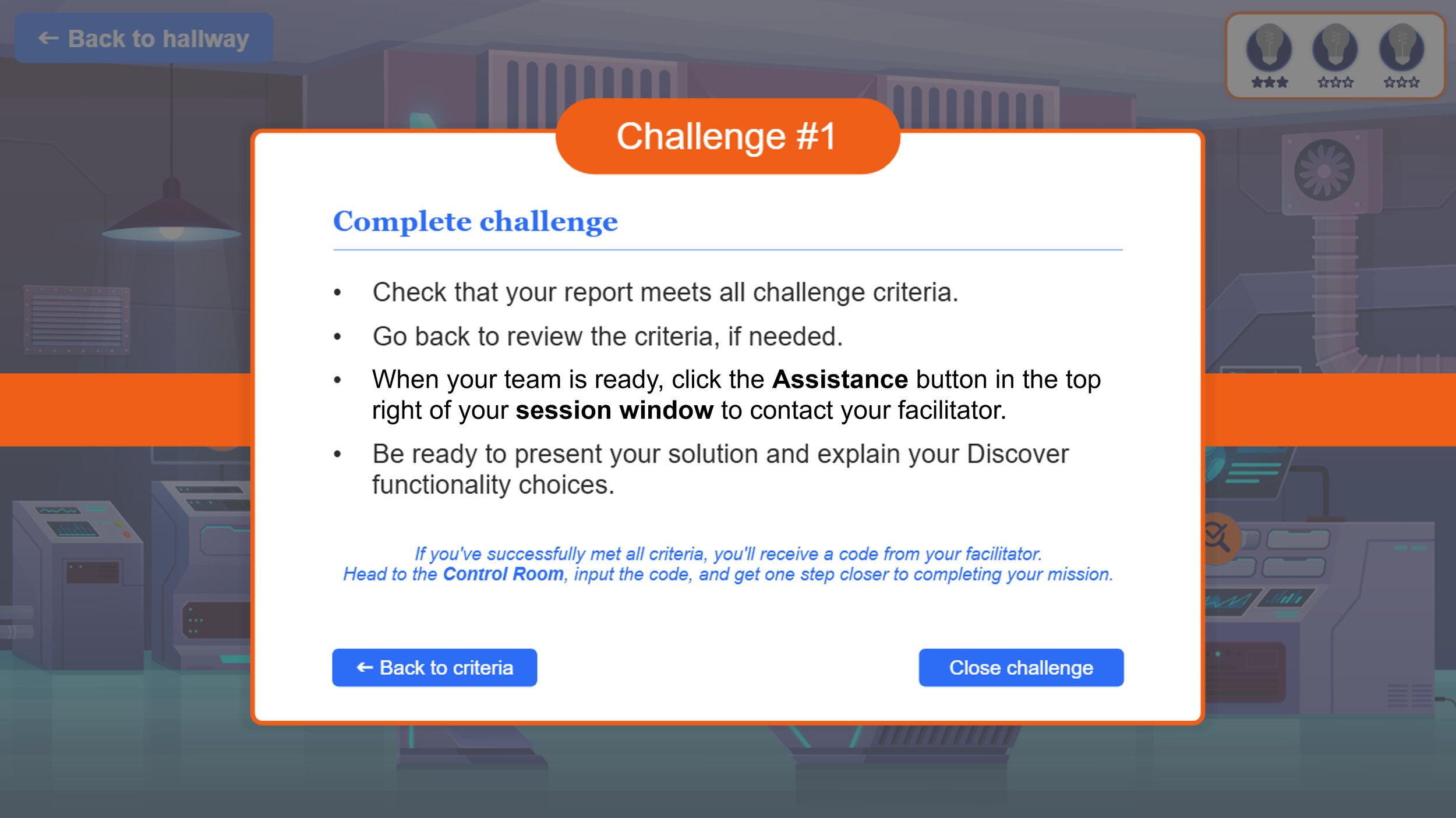

A control room served as the final destination. After completing each report-building task, teams entered a facilitator-issued code there, so advancing in the module depended on facilitator-verified work on the actual report.
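The progression rules described above can be modeled in a few lines. This is only an illustrative sketch in plain Python, not how the module was built (in Storyline 360 this kind of logic would normally live in variables and triggers). The three-star threshold, the class and method names, and the sample codes are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional

STARS_TO_REVEAL = 3  # assumed threshold; the real star count is not stated


@dataclass
class Challenge:
    name: str
    facilitator_code: str    # hypothetical code issued after the report is verified
    stars: int = 0
    completed: bool = False  # would drive the badge state

    @property
    def revealed(self) -> bool:
        # A challenge is uncovered once enough stars have been earned.
        return self.stars >= STARS_TO_REVEAL

    def record_knowledge_check(self, correct: bool) -> None:
        # One star per correct knowledge check, capped at the threshold.
        if correct and not self.revealed:
            self.stars += 1


@dataclass
class Mission:
    challenges: List[Challenge]

    def current(self) -> Optional[Challenge]:
        # The first challenge that has not been completed yet, if any.
        for challenge in self.challenges:
            if not challenge.completed:
                return challenge
        return None

    def enter_code(self, code: str) -> bool:
        # Control Room gate: advance only with the facilitator-issued code.
        challenge = self.current()
        if challenge is None or not challenge.revealed:
            return False
        if code.strip().upper() != challenge.facilitator_code:
            return False
        challenge.completed = True
        return True


mission = Mission([Challenge("Report 1", "ORBIT"), Challenge("Report 2", "LUNAR")])
for _ in range(STARS_TO_REVEAL):
    mission.current().record_knowledge_check(correct=True)
advanced = mission.enter_code("orbit")  # True once Report 1 is revealed
```

Keeping the code check separate from the star count mirrors the design intent: stars reflect in-module knowledge checks, while the code reflects work the facilitator has verified outside the module.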

Learning Theory and Model Integration

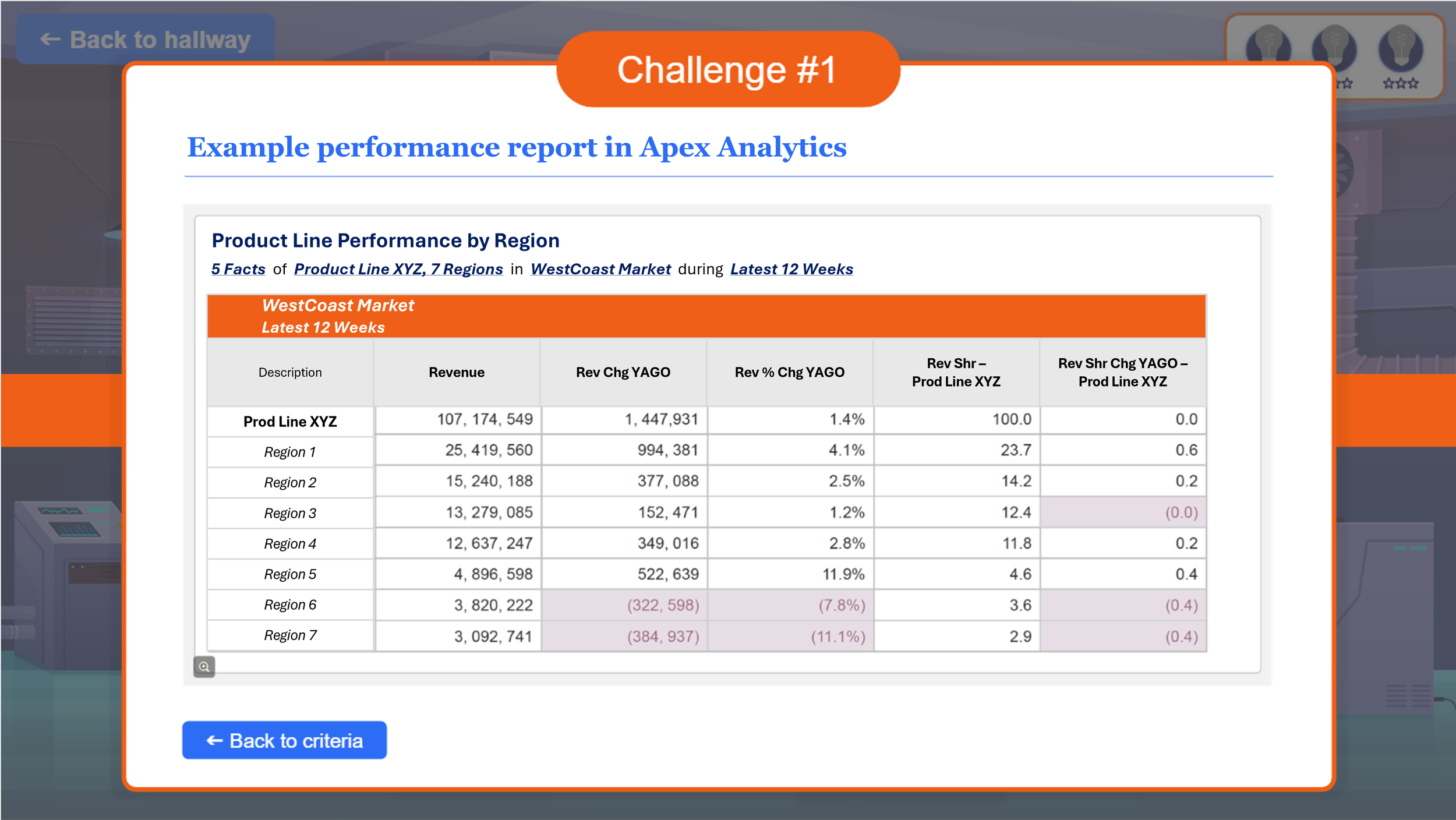

Merrill's First Principles: All tasks are anchored in authentic report-building scenarios modeled on real client deliverables. Learners do, not just read.

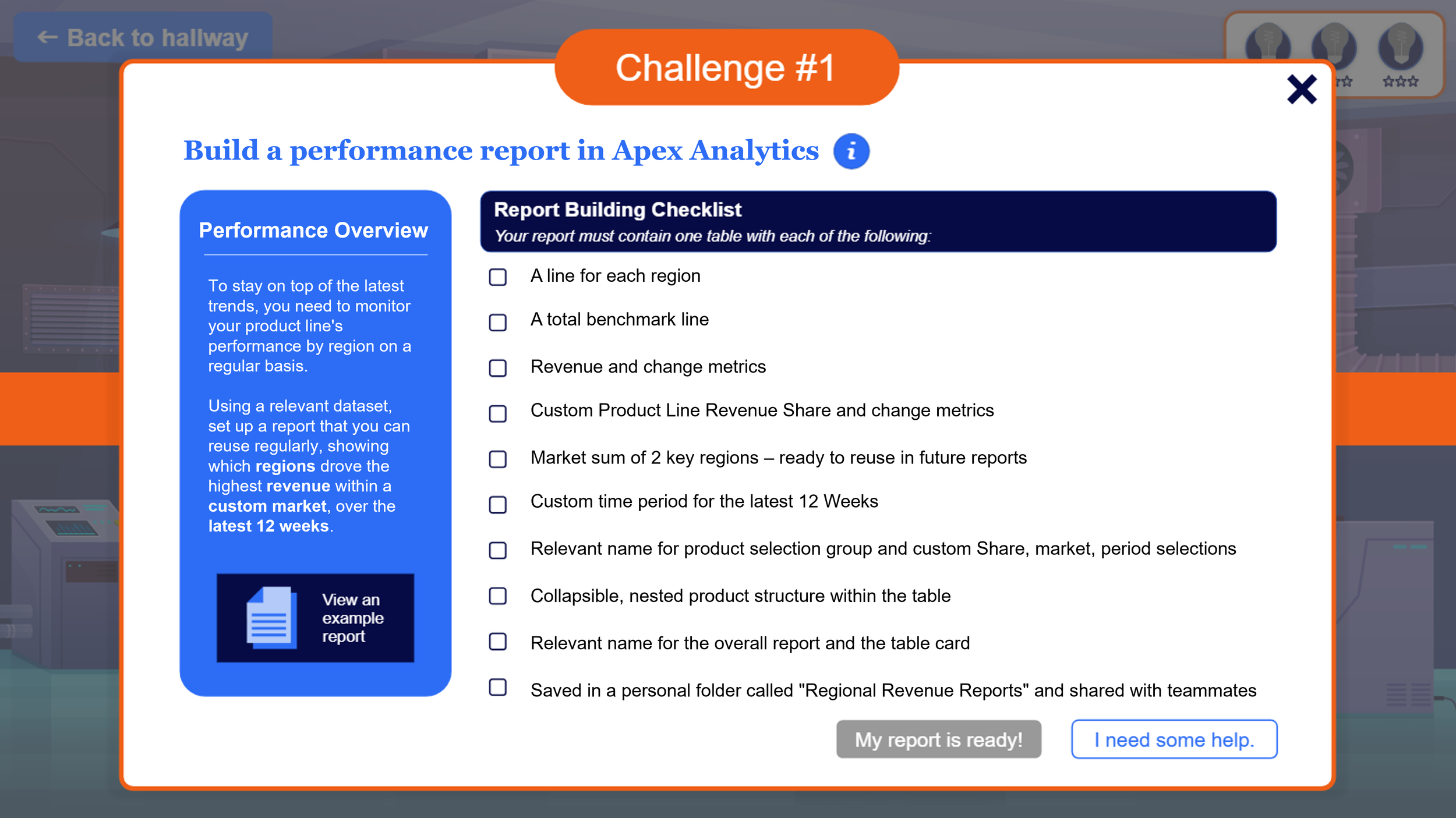

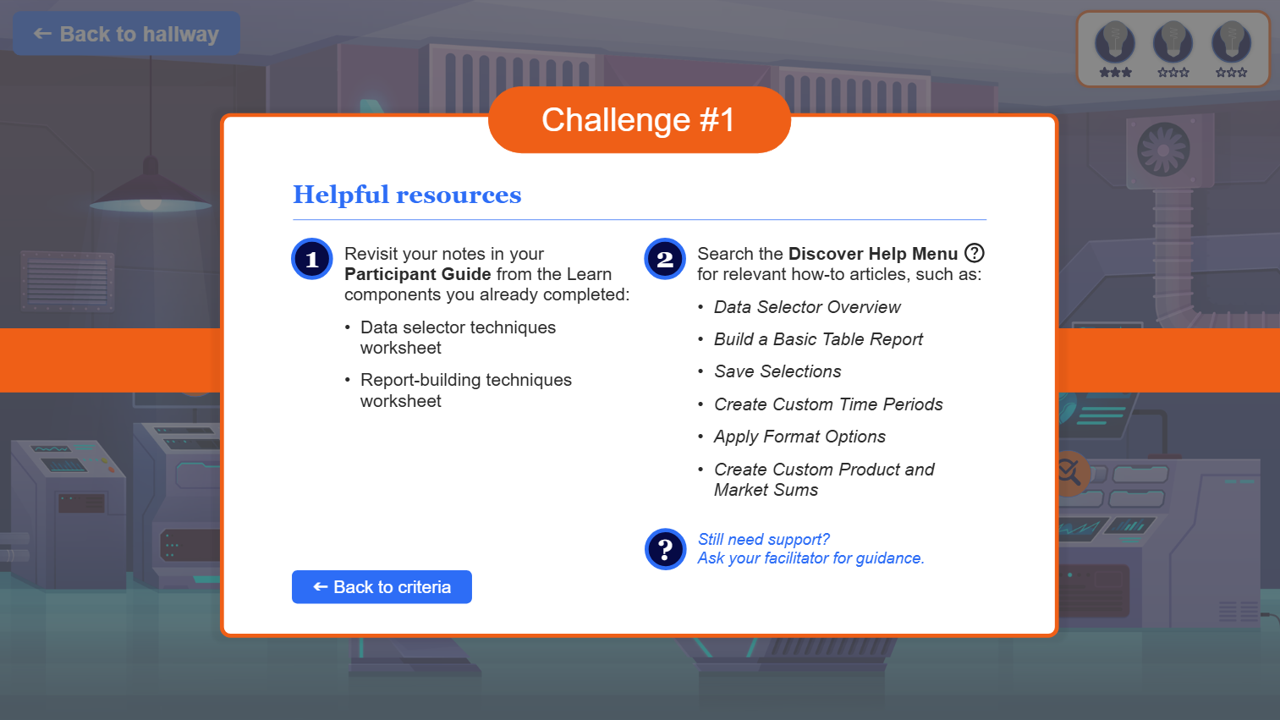

Cognitive Load Theory: Chunked interactions, scaffolded challenge criteria, and embedded resource panels reduce overwhelm at moments of highest complexity.

Constructivism and Social Learning: Team-based clue discovery and collaborative knowledge checks reinforce peer reasoning and shared accountability.

Backward Design: All interactions were mapped to certification-level competencies from the outset, so every game element serves a learning objective.

Design Highlights

Several design decisions distinguish this experience from standard eLearning:

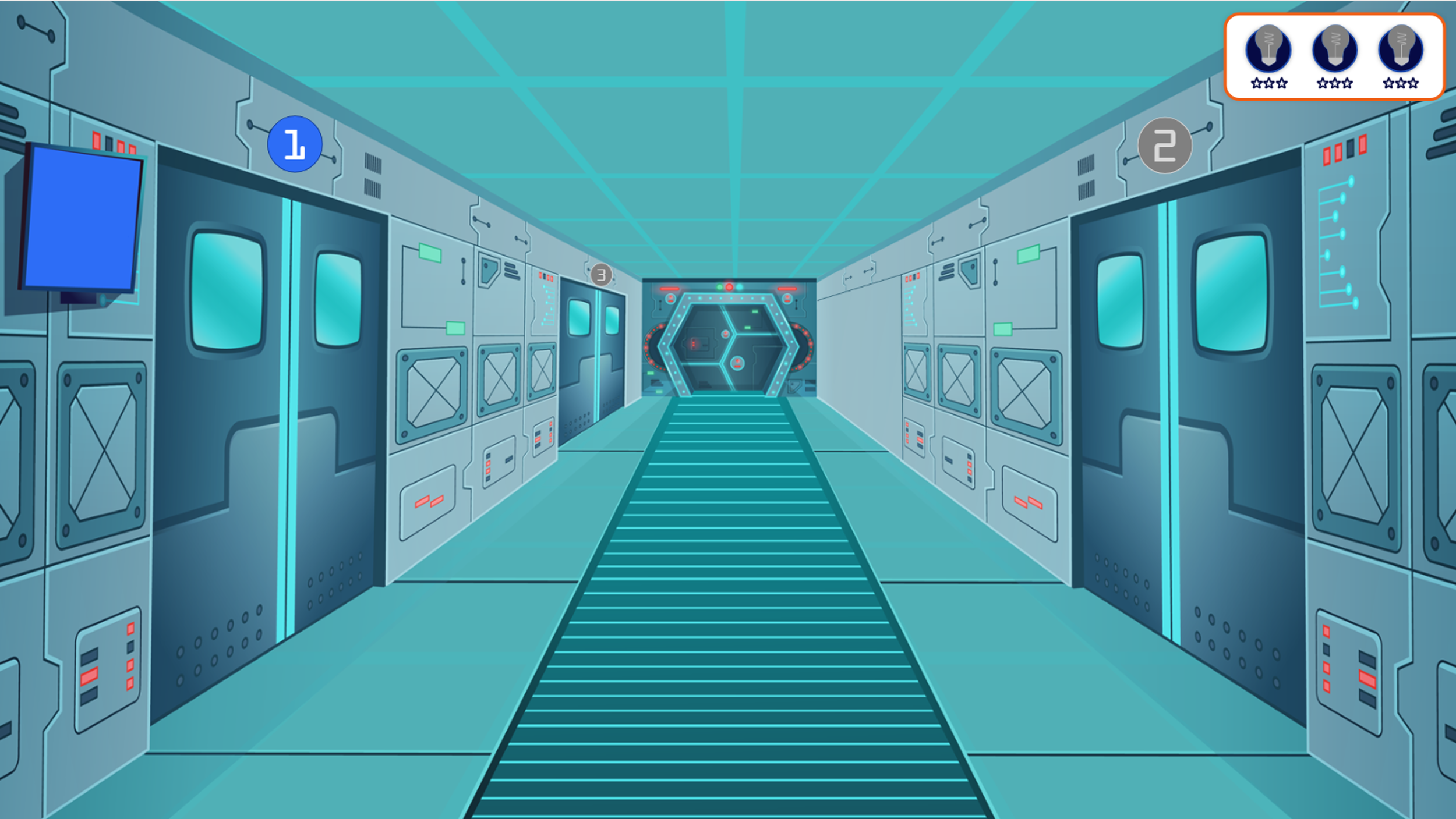

Hotspot-Based Navigation: Rather than a traditional menu or button interface, learners navigate by clicking numbered doors in a hallway scene. This maintains immersion while providing intuitive wayfinding — the space station environment stays intact without breaking to a menu screen.

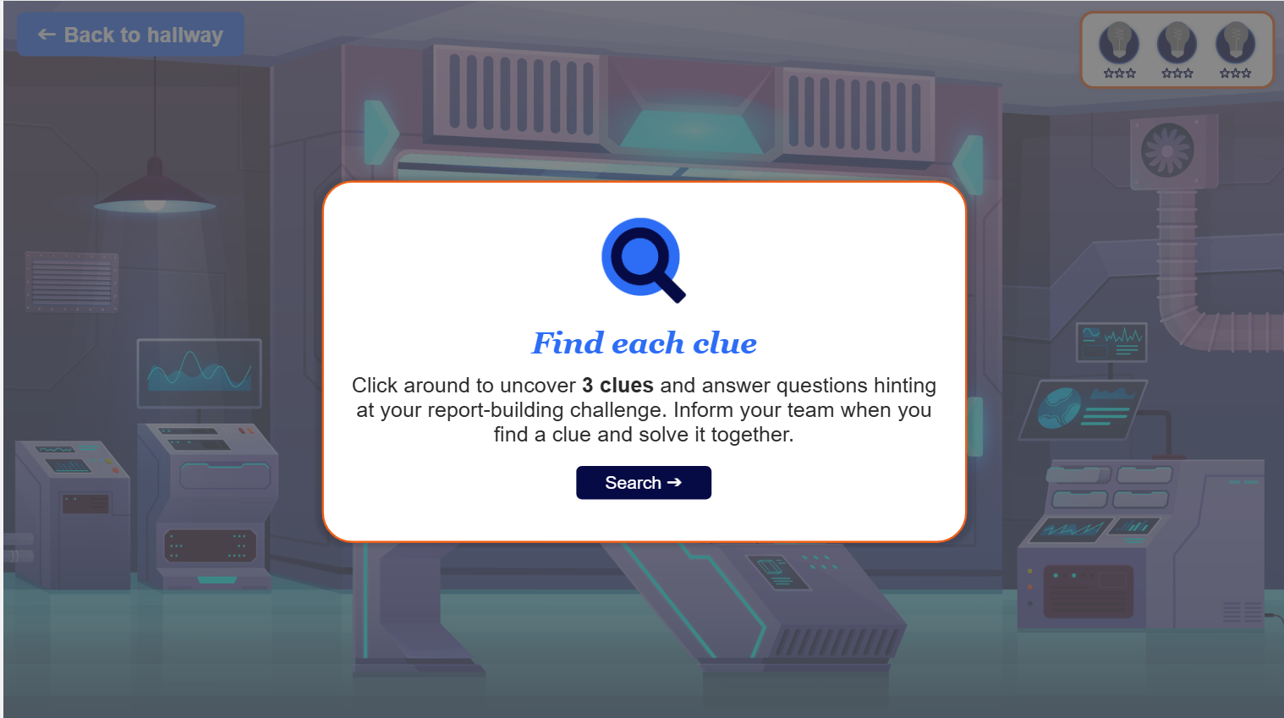

Layered Modal Architecture: Room interactions use a two-step modal sequence: an entry instruction modal primes learners for the collaborative task before exposing them to the clue discovery interaction. This reduces cognitive load at the moment of highest engagement.

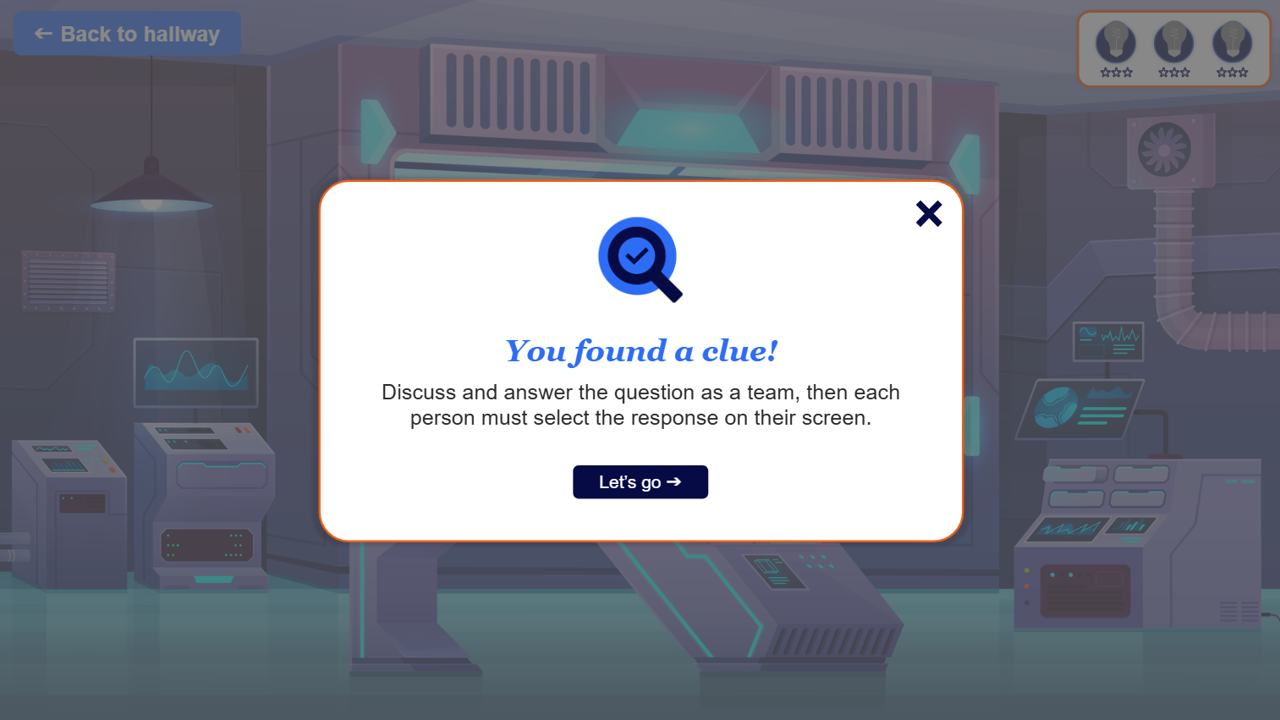

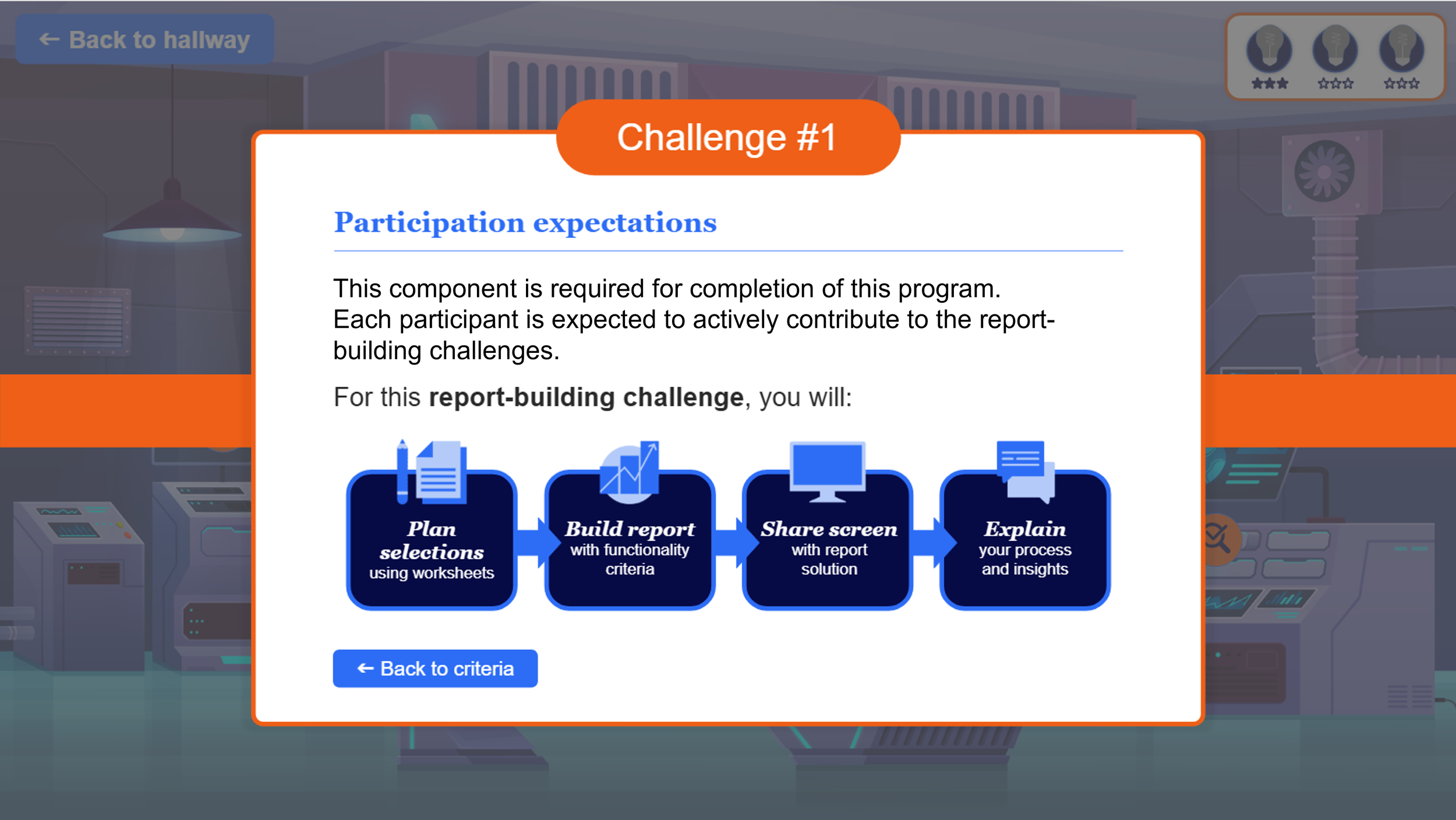

Team Collaboration Built Into the Interaction: Both the clue discovery modals and the knowledge check questions embed explicit team instructions in the interaction itself, rather than relying on facilitator prompting to trigger collaboration. Every learner responds individually, but the discussion is built in as a required step.

Gamification as a Learning Signal: The star tracker and badge system are not decorative — each star earned corresponds to a knowledge check answered correctly, and the badge icons update state as challenges are completed. Progress is always visible and always meaningful.

Performance Support Within the Task: The challenge criteria modal includes a checklist, an example report view, participation expectations, and a helpful resources panel — all accessible from within the same interaction without navigating away. Scaffolding is embedded where the learner needs it, not on a separate reference slide.

-

I identified the need for a structured, high-stakes practice environment that could bridge foundational analytics instruction with real-world performance under live conditions. Using Backward Design, I mapped certification-level competencies first, then worked backward to determine what skills and interactions the workshop needed to develop — ensuring every design decision served a measurable outcome.

-

I defined measurable learning objectives aligned to certification competencies and designed the full workshop experience: mission narrative, room-based exploration mechanic, clue-finding sequence, knowledge check structure, and gamification architecture. Storyboards and interaction flows were created for all slides and modal sequences, grounded in Constructivism, Cognitive Load Theory, and Merrill's First Principles.

-

I served as instructional design lead throughout the Storyline build, working directly in the file alongside the developer to direct interaction design, make content-level edits, and ensure the technical execution matched the instructional intent. I built scenario-based activities, knowledge checks, and embedded resource panels aligned to real reporting challenges, and conducted QA for usability, accessibility, and objective alignment.

-

Following development handoff, the pilot was facilitated by trainers with oversight from my manager, who monitored engagement and tracked learner progress through the live sessions. My involvement at this stage focused on supporting the facilitation team with any content or design questions that arose and remaining available for rapid iteration if the pilot surfaced issues requiring adjustments to the build.

-

Formative evaluation was conducted during the pilot phase by the facilitation team, with findings shared back to inform any content or interaction refinements needed post-launch. Pilot results showed a 92% learner satisfaction rating for applicability and engagement. Assessments were aligned to the certification competencies identified through Backward Design, so that evaluation measured what the design intended.

Reflection: Project Takeaways

Key Learnings

Designing and piloting the practical application component reinforced the value of grounding learning in authentic, real‑world tasks. The immersive, themed environment — combined with scenario‑based challenges, cognitive scaffolding, and collaborative problem‑solving — created a meaningful bridge between foundational instruction and certification‑level performance. Strong competency gains and facilitator endorsement supported the effectiveness of the design and its readiness for broader rollout.

Enhancement Opportunities

1. Build in an earlier, structured user testing cycle prior to the pilot.

While the pilot offered valuable insights, future projects would benefit from earlier, iterative user testing during development. Short usability checks with a small learner group — before the full pilot — would help validate interaction patterns, cognitive load, and scenario clarity earlier in the process. This would accelerate refinement and reduce rework later in the cycle.

2. Formalize a more frequent cross-functional review cycle.

Collaboration with trainers and the developer was strong, but future projects could be strengthened by establishing a more formalized review cadence across SMEs, trainers, and technical partners. A recurring touchpoint (e.g., weekly design reviews) would ensure alignment, surface questions sooner, and create a shared sense of ownership throughout the build. This structure would also support smoother handoff if team transitions occur, as they did in this project.